UAB Research Finds Security Risks with Computer-Created Voices

What if someone could take a recording of your voice and use it to create a digital version? In other words, he or she could use a computer to make it sound like you’re saying anything. A few weeks ago, the Canadian startup Lyrebird released a computer-generated conversation between some familiar voices.

It’s not the most natural-sounding, but you can probably tell the voices mimic Presidents Donald Trump, Barack Obama, and former Secretary of State Hillary Clinton. The technology has applications beyond parlor tricks. It’s also raising security concerns. That’s because we use our voices to identify ourselves.

Digital assistants such as Apple’s Siri or Amazon’s Alexa are designed to recognize a specific voice. Otherwise anyone shouting “Hey, Siri” could unlock any phone. Beyond that, some banks are now using voice recognition software to allow customers to access financial information by phone. Your voice essentially becomes a password.

But perhaps it’s not a very good password if software can now mimic your voice. Think about how many voices could be collected from YouTube videos or surreptitiously recorded with a smartphone.

So a team with UAB’s SPIES research group, which looks at emerging security and privacy issues, tackled a key question: is this type of security vulnerable to impersonation?

The study looked at two things. First, would a computer recognize and thus reject the fake voice? Turns out, according to this study, the synthesized voice fooled the computer more than 80 percent of the time.

“It’s not secure at all,” says Maliheh Shirvanian, who was part of the research team.

The second part of the study tested humans. Could they tell the difference between a real and fake voice? Test subjects listened to clips of Morgan Freeman and one like this.

Or maybe this…

That’s a fake Oprah Winfrey followed by her real voice.

People got it right only about half the time. Better than the computer but still no better than chance.

When this study came out in 2015, it raised a lot of alarms within the tech community. But Dan Miller says that’s overblown. He’s lead analyst with the firm Opus Research and he follows voice technology. Miller explains there are good procedures and technology in place to prevent fraud. He says outside the lab, banks he’s worked with can reduce that false acceptance rate to less than 2 percent.

“There is always a view that you can defeat these things and indeed you can,” says Miller. “But the safeguards against them being defeated are developing as rapidly as the methods to defeat them.”

Miller says every type of password has vulnerabilities. That’s why security experts recommend multi-factor authentication, where you need more than just one type of credential to access something.
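The two figures quoted in this story, more than 80 percent in the UAB study and less than 2 percent for the banks Miller describes, are both false acceptance rates: the share of fake-voice attempts a system wrongly lets through. A minimal sketch of that arithmetic follows. The function name and the tallies are hypothetical, chosen only to echo the reported figures; they are not data from the study or from any bank.

```python
def false_acceptance_rate(impostor_results):
    """Fraction of impostor attempts that the system wrongly accepted.

    impostor_results: list of booleans, one per fake-voice attempt,
    True if the system accepted the fake as the real speaker.
    """
    if not impostor_results:
        raise ValueError("need at least one impostor attempt")
    return sum(impostor_results) / len(impostor_results)


# Hypothetical tallies, NOT real study data.
lab_attempts = [True] * 41 + [False] * 9    # 41 of 50 fakes accepted
bank_attempts = [True] * 1 + [False] * 99   # 1 of 100 fakes accepted

lab_far = false_acceptance_rate(lab_attempts)
bank_far = false_acceptance_rate(bank_attempts)
print(f"lab: {lab_far:.0%}, bank: {bank_far:.0%}")  # lab: 82%, bank: 1%
```

The rate counts only impostor attempts. How often a system turns away the genuine speaker is a separate measure and is not covered here.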

UAB’s Maliheh Shirvanian says the study from 2015 tested speech algorithms. They expect to release a new study in the coming months that tests actual apps which use speech recognition. It’ll be a measure of how quickly the technology is advancing, both to fake voices and to fight the fakes.

Alabama’s racial, ethnic health disparities are ‘more severe’ than other states, report says

Data from the Commonwealth Fund show that the quality of care people receive and their health outcomes worsened because of the COVID-19 pandemic.

What’s your favorite thing about Alabama?

That's the question we put to those at our recent News and Brews community pop-ups at Hop City and Saturn in Birmingham.

Q&A: A former New Orleans police chief says it’s time the U.S. changes its marijuana policy

Ronal Serpas is one of 32 law enforcement leaders who signed a letter sent to President Biden in support of moving marijuana to a Schedule III drug.

How food stamps could play a key role in fixing Jackson’s broken water system

JXN Water's affordability plan aims to raise much-needed revenue while offering discounts to customers in need, but it is currently tied up in court.

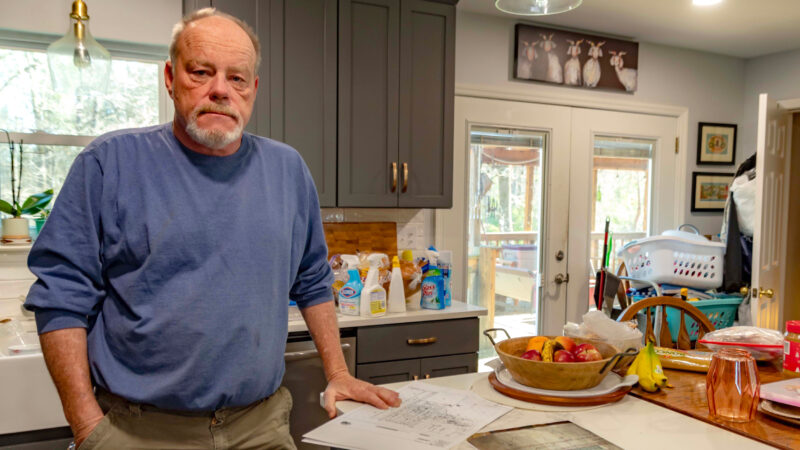

Alabama mine cited for federal safety violations since home explosion led to grandfather’s death, grandson’s injuries

Following a home explosion that killed one and critically injured another, residents want to know more about the mine under their community. So far, their questions have largely gone unanswered.

Crawfish prices are finally dropping, but farmers and fishers are still struggling

Last year’s devastating drought in Louisiana killed off large crops of crawfish, leading to a tough season for farmers, fishers and seafood lovers.